Inception

Developments in the context of Open, Big, and Linked Data have led to an enormous growth of structured data on the Web.

To consume and manage data efficiently at this rate of growth, many Data Management Solutions (DMSs) have been developed. Many efforts exist to benchmark these domain-specific DMSs; however,

- reproducing these third-party benchmarks is an extremely tedious task, and

- there is a lack of a common framework which enables and advocates the extensibility and reusability of the benchmarks.

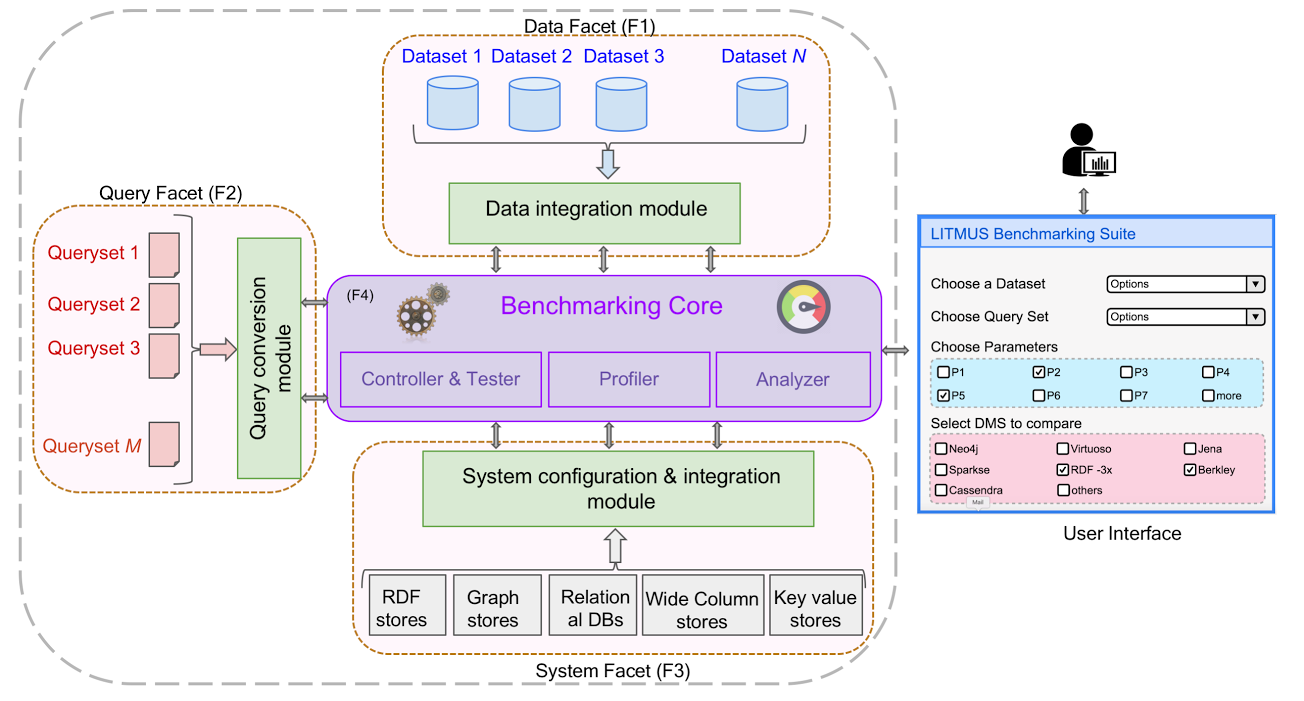

LITMUS will go beyond classical storage benchmarking frameworks by enabling analysis of the performance of DMSs across query languages.